What is Guided Backpropagation?

Guided Backpropagation (GBP) [3] is an approach designed by Springenberg et al. that builds on the ideas of Deconvolution [1] and Saliency [2]. The authors argue that the approach of Simonyan et al. [2] has an issue with the flow of negative gradients, which decreases the accuracy of the visualizations of the higher layers we are trying to interpret. Their idea is to combine the two approaches and add a “guide” to the Saliency method with the help of deconvolution.

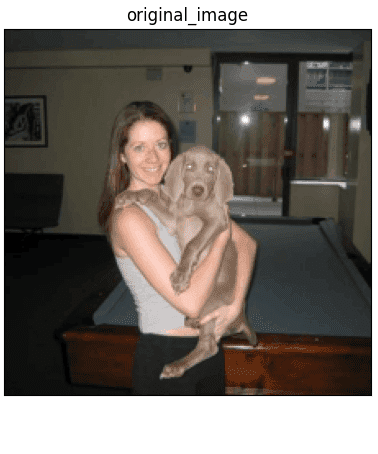

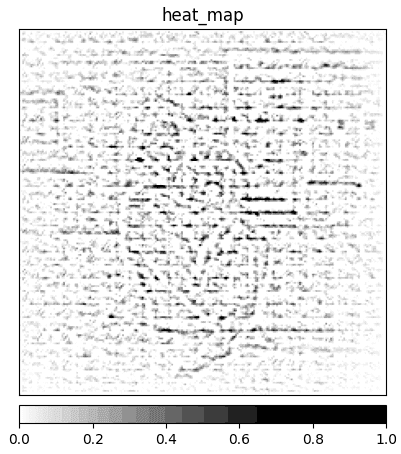

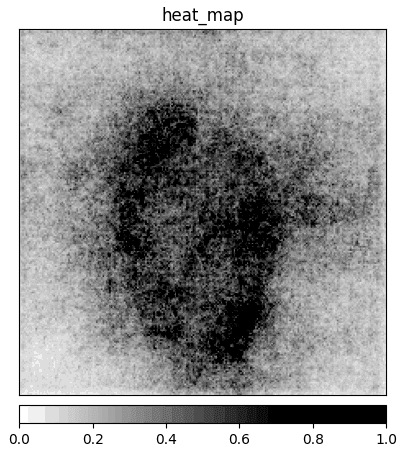

To achieve that, we have to focus on the ReLU activation function in the CNN. In the Rectification component of the deconvnet, all non-positive values are masked out by the ReLU. In that layer, the values are computed only based on the top signal (the reconstruction coming from the upper layer), and the layer's input from the forward pass is ignored. In the Saliency method, on the other hand, the gradient values are computed based on the input image: during the backward pass, the ReLU lets a gradient through only where its input in the forward pass was positive. If we take the deconvnet masking of the Rectification layer and apply it to the gradient values of the Saliency method, we can remove the noise caused by the negative gradient values. In other words, at every ReLU a value is propagated backward only if both the forward input and the incoming gradient are positive. This noise removal is the reason why the method has the prefix “guided”: the deconvnet guides the backpropagated values of the Saliency method to produce sharper images (Fig. 1c).
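To make the difference between the three methods concrete, here is a minimal NumPy sketch of how each one sends a signal backward through a single ReLU. The function `relu_backward` and its `rule` argument are hypothetical names chosen for illustration, not part of any library; the toy values are arbitrary.

```python
import numpy as np


def relu_backward(x, grad_out, rule):
    """Send grad_out back through a ReLU whose forward input was x.

    rule="saliency":  standard gradient; keep values where the forward input was positive.
    rule="deconvnet": rectify the top signal itself; the forward input x is ignored.
    rule="guided":    keep values only where both the forward input and grad_out are positive.
    """
    forward_mask = x > 0
    backward_mask = grad_out > 0
    if rule == "saliency":
        mask = forward_mask
    elif rule == "deconvnet":
        mask = backward_mask
    elif rule == "guided":
        mask = forward_mask & backward_mask
    else:
        raise ValueError(f"unknown rule: {rule!r}")
    return np.where(mask, grad_out, 0.0)


# Toy values: forward input of the ReLU and the signal arriving from the layer above.
x = np.array([1.0, -2.0, 3.0, -0.5])
g = np.array([-1.0, 2.0, 0.5, -3.0])

print(relu_backward(x, g, "saliency").tolist())   # [-1.0, 0.0, 0.5, 0.0]
print(relu_backward(x, g, "deconvnet").tolist())  # [0.0, 2.0, 0.5, 0.0]
print(relu_backward(x, g, "guided").tolist())     # [0.0, 0.0, 0.5, 0.0]
```

Note that the deconvnet rule never looks at `x`, matching the Rectification component described above, while the guided rule keeps only the entries that survive both masks.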

As we can see in Figure 1c, the “guide” significantly reduces the amount of noise generated by the Saliency method (Fig. 1d). The idea of GBP is often misunderstood and interpreted as “applying deconvolution results to the saliency results”. This is not true, because the ReLU masking taken from the deconvnet is applied at every ReLU layer during the backward pass and therefore affects the gradient values all the way down to the input of the CNN, rather than being applied only once at the first level of the CNN.
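The following sketch illustrates why the guide has to be applied at every layer. It assumes a hypothetical toy network of three fully connected layers with ReLUs, standing in for the convolutional layers of a CNN (in GBP only the ReLU backward pass changes, so the idea carries over). It compares guided backpropagation with the misreading described above, in which the plain saliency gradient is computed first and a positive-value mask is applied once at the end. The weights and input are random and purely illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy network: three fully connected layers (8 -> 16 -> 16 -> 1)
# with a ReLU after each of the first two, standing in for a CNN.
weights = [
    rng.standard_normal((8, 16)),
    rng.standard_normal((16, 16)),
    rng.standard_normal((16, 1)),
]


def forward(x, weights):
    """Return the network output and the input of every ReLU (needed for the backward pass)."""
    relu_inputs = []
    h = x
    for W in weights[:-1]:
        z = h @ W
        relu_inputs.append(z)
        h = np.maximum(z, 0.0)
    return h @ weights[-1], relu_inputs


def backward(relu_inputs, weights, guided):
    """Backpropagate d(output)/d(input).

    guided=False: plain gradient (Saliency).
    guided=True:  at every ReLU, also drop non-positive incoming gradients (GBP).
    """
    grad = weights[-1] @ np.ones(1)  # gradient w.r.t. the last hidden activation
    for W, z in zip(reversed(weights[:-1]), reversed(relu_inputs)):
        mask = z > 0                 # forward-input mask (standard ReLU gradient)
        if guided:
            mask &= grad > 0         # backward-signal mask taken from the deconvnet
        grad = W @ np.where(mask, grad, 0.0)
    return grad


x = rng.standard_normal(8)
_, relu_inputs = forward(x, weights)

saliency = backward(relu_inputs, weights, guided=False)
guided = backward(relu_inputs, weights, guided=True)
# The misreading: compute the saliency map first, then mask it once at the end.
posthoc = np.where(saliency > 0, saliency, 0.0)
```

Because a linear layer sits between the input and the first ReLU, the guided map can still contain negative values, while the once-masked saliency map cannot, so the two generally differ. In a CNN, the first convolution plays the role of that linear layer.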

Further reading

I’ve decided to create a series of articles explaining the most important XAI methods currently used in practice. Here is the main article: XAI Methods - The Introduction

References:

- [1] M. D. Zeiler, R. Fergus. Visualizing and Understanding Convolutional Networks, 2013.

- [2] K. Simonyan, A. Vedaldi, A. Zisserman. Deep Inside Convolutional Networks: Visualising Image Classification Models and Saliency Maps, 2014.

- [3] J. T. Springenberg, A. Dosovitskiy, T. Brox, M. Riedmiller. Striving for Simplicity: The All Convolutional Net, 2014.

- [4] A. Khosla, N. Jayadevaprakash, B. Yao, L. Fei-Fei. Stanford Dogs Dataset. https://www.kaggle.com/jessicali9530/stanford-dogs-dataset, 2019. Accessed: 2021-10-01.